Every team building with LLMs in 2026 faces the same question: how do we get our model to answer accurately using our data? The three main approaches — retrieval-augmented generation (RAG), fine-tuning, and long context windows — each solve a different version of that problem. Most teams are still asking "which one should I use?" when the real question is "where should my intelligence live: in model weights, in external knowledge, or both?"

After building several RAG systems and reviewing the latest research, here's my practical breakdown of where things stand and when to use what.

What RAG Actually Is

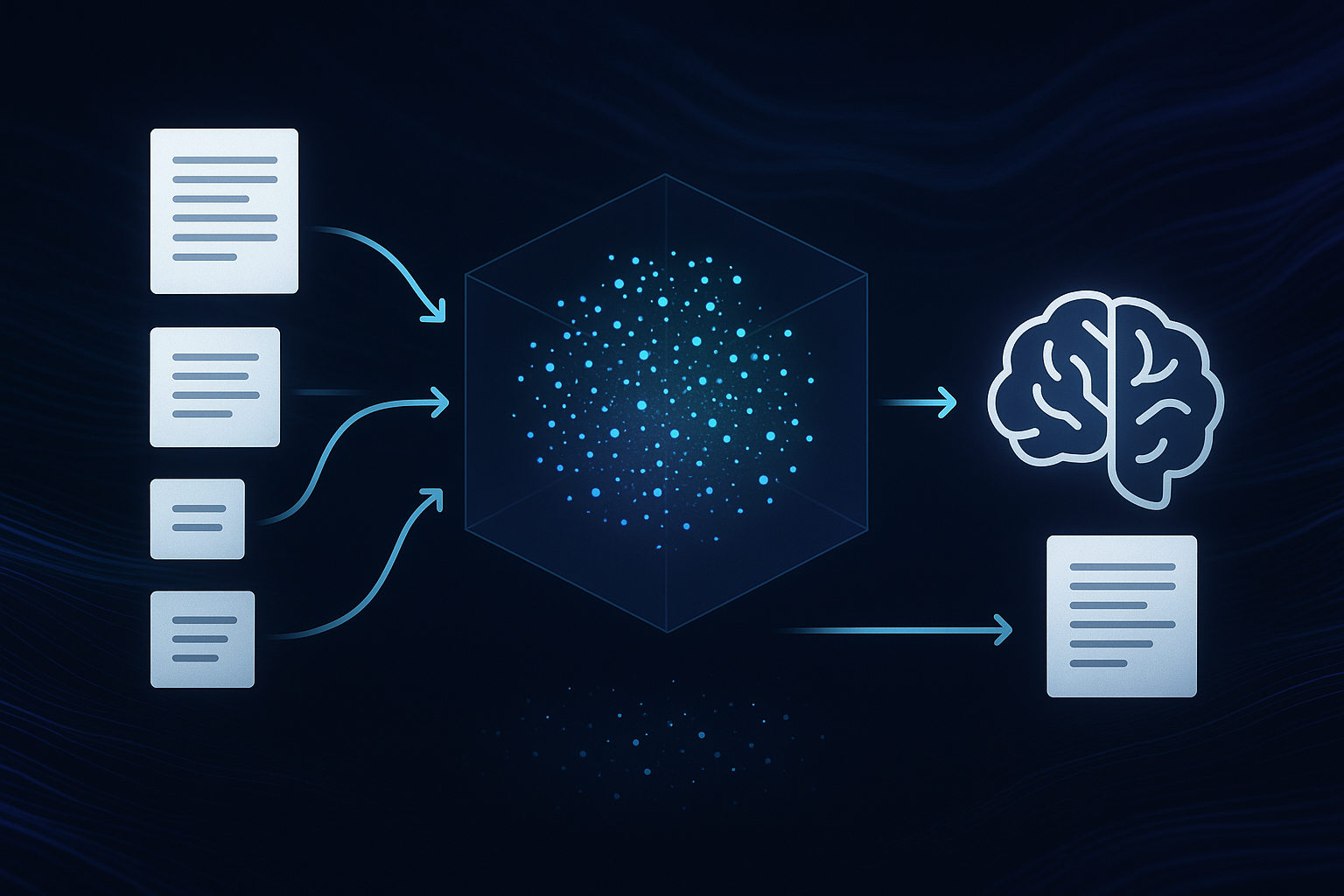

At its core, RAG is a two-step process: retrieve relevant documents from a knowledge base, then generate a response using those documents as context. The original 2020 paper by Lewis et al. proposed this as a way to give language models access to external knowledge without retraining them. Six years later, that simple idea has evolved into a family of sophisticated architectures.

The key insight remains the same: instead of expecting the model to memorize everything during training, you feed it the right information at inference time. This is why RAG dominates enterprise AI — organizational knowledge changes constantly, and retraining a model every time a policy document updates is neither practical nor cost-effective.

The Architecture Spectrum: From Naive to Agentic

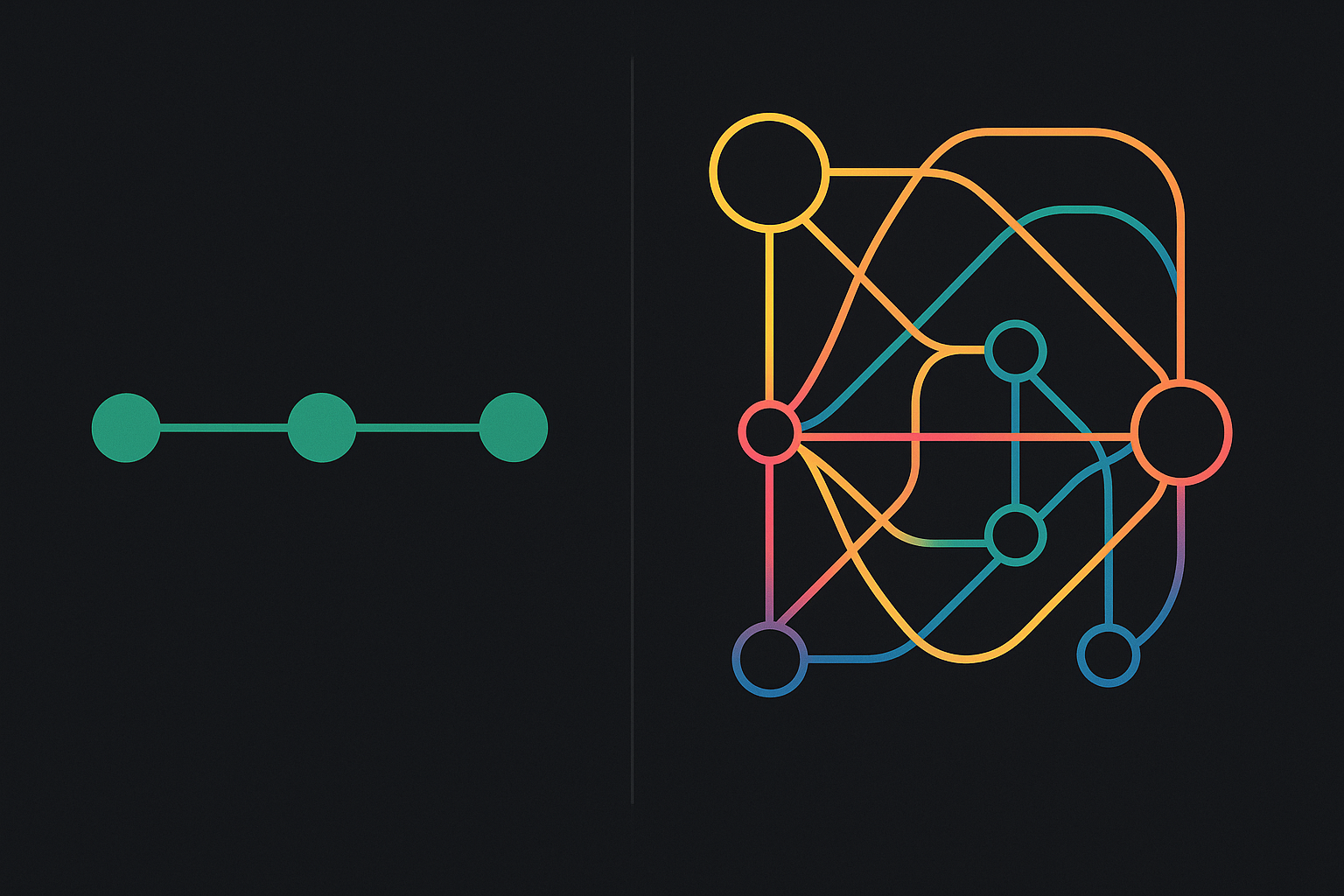

RAG architectures in 2026 fall along a spectrum of complexity:

Naive RAG

The baseline pattern: chunk your documents, embed them into a vector database, retrieve the top-k most similar chunks at query time, and pass them to the LLM as context. This is where most tutorials start and where many production systems still operate. It works surprisingly well for simple document Q&A, but breaks down when queries require multi-hop reasoning, when relevant information spans multiple documents, or when the retrieved chunks are noisy.

Advanced RAG

The current sweet spot for production systems. Advanced RAG adds layers of intelligence around the basic retrieve-generate loop:

- Query rewriting: Reformulating the user's question to improve retrieval quality (e.g., expanding acronyms, decomposing multi-part questions)

- Hybrid retrieval: Combining semantic search (dense embeddings) with lexical search (BM25/sparse) for better recall, especially on technical terms and proper nouns

- Reranking: Using a cross-encoder model to re-score retrieved chunks after initial retrieval, dramatically improving precision

- Hierarchical chunking: Approaches like RAPTOR that recursively embed, cluster, and summarize text at multiple abstraction levels for better multi-document reasoning

Agentic RAG

The frontier. Agentic RAG wraps the retrieval pipeline in an autonomous agent that can decide when to retrieve, what to retrieve, and whether the results are good enough. Two key patterns have emerged:

- Corrective RAG (CRAG): Evaluates retrieved evidence quality before generation. If the evidence is weak, it dynamically re-triggers retrieval with a reformulated query or decomposes the input into simpler sub-queries.

- Self-RAG: Incorporates self-reflection where the model decides whether it needs retrieval at all, evaluates relevance of retrieved data, and critiques its own outputs for factual grounding.

GraphRAG

The newest entrant. GraphRAG combines vector search with knowledge graphs and structured ontologies. Instead of retrieving flat text chunks, it traverses relationships between entities — answering questions like "which suppliers are affected by the new tariff policy?" by following edges in a knowledge graph rather than hoping the right paragraph appears in a vector search. Enterprise implementations report search precision as high as 99% for structured queries, though the upfront investment in graph construction is significant.

RAG vs. Fine-Tuning vs. Long Context

Here's the decision framework I use:

Use RAG when the failure mode is missing or stale knowledge. If your model gives wrong answers because it doesn't have access to the right information — company policies, product documentation, recent research — RAG is the answer. It's especially strong when data changes frequently, when you need source attribution ("this answer came from document X, page 3"), and when you're working across large document collections.

Use fine-tuning when the failure mode is behavioral. If your model has the right information but responds in the wrong format, uses the wrong tone, or can't follow domain-specific reasoning patterns, fine-tuning is the fix. With PEFT methods like LoRA and QLoRA, fine-tuning a 7B model costs $50–$500 on a GPU marketplace — it's no longer a resource-gated activity. Fine-tuning changes how the model responds; RAG changes what it responds with.

Use long context when your knowledge base is small enough to fit. If you can pass all relevant documents directly into the prompt — say, a 50-page technical spec or a codebase under 100 files — long context with prompt caching can be simpler and cheaper than building a RAG pipeline. But be aware: accuracy drops 10–20 percentage points when relevant information sits in the middle of long contexts rather than at the edges, and costs scale linearly with input tokens.

The 2026 Best Practice: Hybrid Systems

The most effective production systems in 2026 combine all three. A common pattern:

- Fine-tune the base model for task-specific behavior — response format, domain vocabulary, reasoning patterns

- Use RAG to provide relevant domain context at inference time — the fine-tuned model handles "how" to respond, while RAG handles "what" to respond with

- Leverage long context as part of the RAG pipeline — retrieve the right 20 chunks, then pass them all at once for holistic reasoning

RAG is also evolving from "retrieval-augmented generation" into something broader: a context engine. The idea is that no matter how intelligent an agent is, the quality of its decisions depends on the quality and relevance of the context it receives. Dynamically assembling the most effective context for different tasks at different moments — what some are calling "context engineering" — is becoming a core competency.

A Decision Framework for Data Scientists

Before you choose an architecture, start here:

- Start with prompting + evals. If you skip evaluation, every architecture debate is just vibes. Define what "good" looks like first.

- Add RAG before fine-tuning for knowledge-heavy tasks. It's faster to set up, easier to debug, and you can swap the underlying model without rebuilding.

- Fine-tune when behavior is the bottleneck, not missing facts. Wrong format? Fine-tune. Wrong information? RAG.

- Implement hybrid retrieval (semantic + BM25) plus reranking where quality matters. Single-method retrieval consistently underperforms.

- Track two eval layers: retrieval metrics (recall@k, MRR) and answer metrics (accuracy, faithfulness, relevance). A perfect answer built on bad retrieval is a ticking time bomb.

Practical Takeaways

- Don't over-architect. Naive RAG with a good reranker beats a complex GraphRAG pipeline with bad data. Start simple, measure, then add complexity where metrics demand it.

- Hybrid retrieval is table stakes. If you're using only dense embeddings, you're leaving 15–30% recall on the table for technical and entity-heavy queries.

- Chunking strategy matters more than model choice. How you split documents — by semantic boundary, by section, by sliding window — has an outsized impact on retrieval quality.

- Build LLM-agnostic. The most future-proof RAG systems decouple retrieval from generation. When the next model drops, you should be able to swap it in without rebuilding your pipeline.

- Long context didn't kill RAG. Benchmarks consistently show that RAG is cheaper per query, more precise in what it retrieves, and faster for large knowledge bases. Use long context for small, complete documents; use RAG for everything else.

Put volatile knowledge in retrieval. Put stable behavior in weights. Stop trying to force one tool to do both jobs.